Notes for: A Simple Semi-Supervised Learning Framework for Object Detection

Overview

STAC:

- A semi-supervised learning (SSL) framework for object detection

- Deploys highly confident pseudo labels of localized objects from unlabeled images

- Updates the model by enforcing consistency under strong data augmentation

Introduction to SSL

- Provides methods to use unlabeled data to improve model performance when large-scale annotated data is not available

- First generates artificial labels for the unlabeled data.

- Then trains the model to predict these artificial labels when the unlabeled data is fed through semantics-preserving augmentations.

- Data Augmentation

- Improves robustness of neural networks

- Very powerful for SSL image classification!

- SSL is also needed for object detection, where labeling is relatively expensive

Method Pipeline

Phased training on the dataset:

- Train a teacher model on all available labeled data

- Train STAC on both labeled and unlabeled data

- Use a high confidence threshold to control pseudo-label quality

- Train a teacher model on available labeled images.

- Generate pseudo labels of unlabeled images (i.e., bounding boxes and their class labels) using the trained teacher model.

- Apply strong data augmentations to unlabeled images, and augment pseudo labels (i.e., bounding boxes) correspondingly when global geometric transformations are applied.

- Compute unsupervised loss and supervised loss to train a detector.
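The pseudo-labeling step above can be sketched as a simple confidence filter. This is a minimal illustration, not the authors' implementation: `Detection` and `select_pseudo_labels` are hypothetical names, the teacher's output format is an assumption, and the default threshold of 0.9 is the value of τ reported under Details.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Detection:
    """One teacher prediction (hypothetical container for this sketch)."""
    box: Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)
    label: int
    score: float  # teacher confidence in [0, 1]


def select_pseudo_labels(detections: List[Detection], tau: float = 0.9) -> List[Detection]:
    """Keep only teacher predictions whose confidence is at least tau."""
    return [d for d in detections if d.score >= tau]


# Made-up teacher output for one unlabeled image.
teacher_output = [
    Detection((10.0, 20.0, 50.0, 80.0), label=3, score=0.97),
    Detection((15.0, 25.0, 40.0, 60.0), label=7, score=0.42),
    Detection((60.0, 10.0, 90.0, 45.0), label=1, score=0.91),
]
pseudo_labels = select_pseudo_labels(teacher_output, tau=0.9)
print([d.label for d in pseudo_labels])  # [3, 1]
```

Raising `tau` trades pseudo-label coverage for quality: fewer boxes survive, but the ones that do are more likely to be correct.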

Train teacher model

- Based on Faster R-CNN

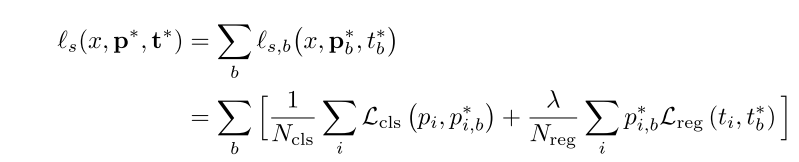

Supervised loss function:

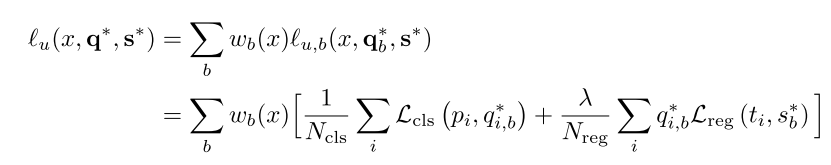

Unsupervised loss function:

Combined loss after joining the two functions:

where A is the strong data augmentation applied to unlabeled images.
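As a reading aid, the way the two losses combine can be sketched as a weighted sum. The symbols ℓ, ℓ_s and ℓ_u are notation assumed for this sketch. λ_u is the coefficient for the unsupervised loss described under Details. ℓ_u is taken to be computed on the augmented unlabeled image A(x) against its pseudo labels.

```latex
\ell \;=\; \ell_s \;+\; \lambda_u \, \ell_u
```

With λ_u = 0 this reduces to ordinary supervised training. Larger values give the pseudo-labeled images more influence on the update.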


Data Augmentation

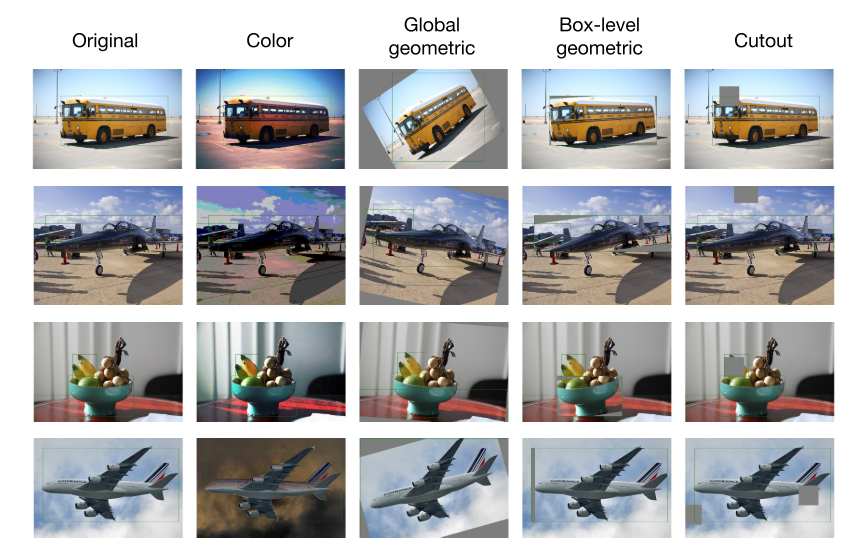

There are three types of augmentation:

- Global Color Transformation (C): Uses color transformation operations from [7] with the proposed magnitude range for each operation.

- Global Geometric Transformation (G): Uses geometric transformation operations from [7], i.e., x-y translation, rotation, and x-y shear.

- Box-level Transformation [69] (B): Uses the three transformation operations from global geometric transformation, but with smaller magnitude ranges.
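When a global geometric transformation moves the image, the pseudo-label boxes have to move with it. The sketch below illustrates this for x-y translation only. `translate_boxes` is a hypothetical helper, and clipping to the image and dropping boxes left with no area are assumptions of this sketch rather than details stated in these notes.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def translate_boxes(boxes: List[Box], dx: float, dy: float,
                    width: float, height: float) -> List[Box]:
    """Shift boxes by (dx, dy), clip them to the image, and drop empty boxes."""
    moved = []
    for x0, y0, x1, y1 in boxes:
        nx0 = min(max(x0 + dx, 0.0), width)
        ny0 = min(max(y0 + dy, 0.0), height)
        nx1 = min(max(x1 + dx, 0.0), width)
        ny1 = min(max(y1 + dy, 0.0), height)
        # A box pushed entirely outside the image collapses to zero area.
        if nx1 > nx0 and ny1 > ny0:
            moved.append((nx0, ny0, nx1, ny1))
    return moved


boxes = [(10.0, 20.0, 50.0, 80.0), (90.0, 90.0, 100.0, 100.0)]
print(translate_boxes(boxes, dx=15.0, dy=0.0, width=100.0, height=100.0))
# [(25.0, 20.0, 65.0, 80.0)]
```

Color transformations leave box coordinates unchanged, so only the geometric operations need this kind of bookkeeping.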

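Augmentation order:

C -> G or B -> Cutout (a color transformation first, then either a global geometric or a box-level transformation, then Cutout)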
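The ordering above can be sketched as a small sampler that picks one operation per stage. This only illustrates the order of the stages: `sample_augmentation_plan` and the color operation names are hypothetical placeholders, and uniform random selection is an assumption. The three geometric operations are the ones listed for G.

```python
import random
from typing import List


def sample_augmentation_plan(rng: random.Random) -> List[str]:
    """Return the names of the operations applied to one image, in order."""
    color_ops = ["color_op_1", "color_op_2"]          # placeholders for C
    geometric_ops = ["translate", "rotate", "shear"]  # operations listed for G
    plan = [f"C:{rng.choice(color_ops)}"]             # stage 1: color
    family = rng.choice(["G", "B"])                   # stage 2: global or box-level
    plan.append(f"{family}:{rng.choice(geometric_ops)}")
    plan.append("cutout")                             # stage 3: Cutout
    return plan


print(sample_augmentation_plan(random.Random(0)))
```

Each call yields a three-stage plan. In a real pipeline, each name would map to an image operation plus the matching box update.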

Details

- Based on Faster R-CNN with FPN

- Uses a ResNet-50 backbone

- Usage of λu and τ:

- λu: Weight (regularization coefficient) of the unsupervised loss

- τ: Confidence threshold for keeping pseudo labels

- STAC performs best when λu=2 and τ=0.9

Although STAC greatly improves mAP through pseudo-labeling, the paper's results suggest that incremental improvements in pseudo-label quality may not bring significant additional benefit.

Limitations

- Insufficient input: effective training requires higher-level labels.

- Noisy pseudo labels can be overused.

While STAC demonstrates an impressive performance gain already without taking confirmation bias [66,1] issue into account, it could be problematic when using a detection framework with a stronger form of hard negative mining [47,29] because noisy pseudo labels can be overly-used. Further investigation in learning with noisy labels, confidence calibration, and uncertainty estimation in the context of object detection are few important topics to further enhance the performance of SSL object detection.

Reference

[1] K. Sohn, Z. Zhang, C.-L. Li, H. Zhang, C.-Y. Lee, and T. Pfister, “A Simple Semi-Supervised Learning Framework for Object Detection,” 2020, [Online]. Available: http://arxiv.org/abs/2005.04757.